What are some strategies to become more aware of our biases while taking into consideration the realities of time and resources?

As Katz & Dack note, simply using data does not necessarily interrupt biases, nor does it help us to recognize bias (37). Thus, we began our research by addressing the sub-question of our identified problem: How are biases recognized to begin with? We then identified the specific biases that we wanted to include in our study: confirmation, omission, vividness, authority, and unconscious bias.

In her visual description of confirmation bias, Bergman (2016) notes that once we have formed an opinion, we seek out confirming data because our brain does not like identified patterns to be broken. In a simple experiment involving moving objects, Taluri et al. (2018) demonstrated that a motion task selectively directed the attention of participants to incoming information that agreed with their initial choice. The News Literacy Project (2019) notes that our ability to choose our own media leads to confirmation bias. Heshmat (2019) adds that those motivated by confirmation bias stop gathering information once the evidence confirms their beliefs.

Chung, Kim & Sohn (2014) note that omission bias is a complex concept interwoven with other factors, so it is difficult to identify a case of omission bias in a “pure” form. In theory, it is defined as a tendency towards inaction rather than action. However, because the concept is ill-defined, the role that omission bias might play in collaborative inquiry is also ill-defined. A study by Ritov and Baron (1992) found that omissions tended to be evaluated as neutral, regardless of their outcome, while commissions were evaluated as positive if the outcomes were better, and negative if they were worse, than the presumed outcome of inaction.

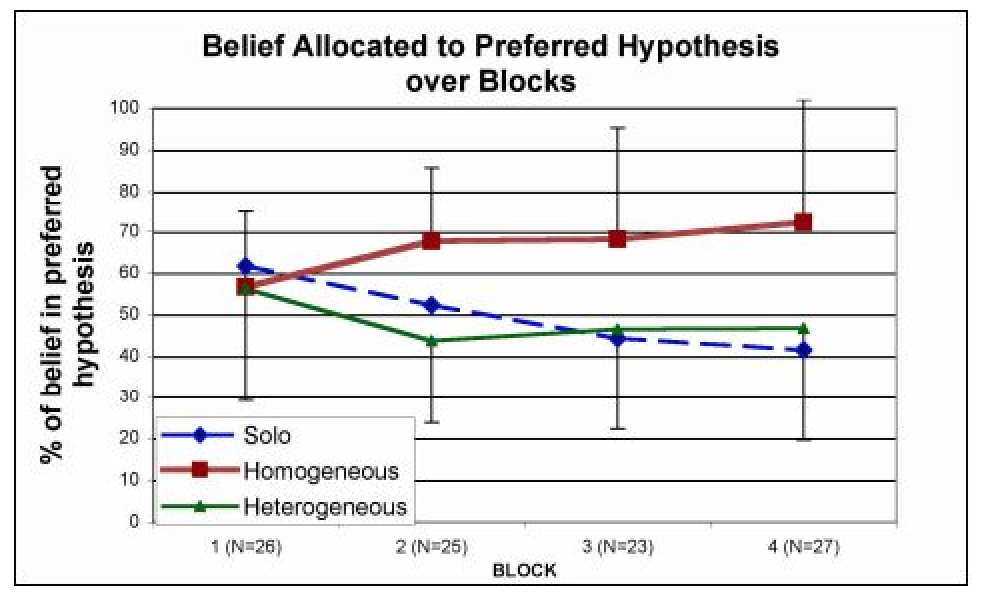

Using the Collaborative Analysis of Competing Hypotheses Environment (CACHE), Billman et al. (n.d.) compared teams holding heterogeneous (diverse) beliefs with teams holding homogeneous (similar) beliefs, finding a reduction in confirmation bias in the teams with heterogeneous beliefs. Teams with homogeneous beliefs, on the other hand, accentuated their initial bias despite exposure to corrective evidence. Their research methodology was supported by a computer-based version of the Analysis of Competing Hypotheses (ACH) matrix, which develops alternative possibilities (hypotheses) and assesses evidence in relation to each hypothesis while seeking and valuing disconfirming evidence, as shown in Figure 1.
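The matrix logic described above can be sketched in a few lines of Python. This is a minimal illustration only, not the CACHE software used by Billman et al.: the hypotheses, evidence items, rating labels, and function names are all hypothetical, and ranking hypotheses by a simple count of inconsistent ratings is an assumed scoring rule chosen to show how disconfirming evidence is valued.

```python
# Ratings an analyst can give one piece of evidence against one hypothesis.
# (Labels are assumptions made for this sketch.)
CONSISTENT, NEUTRAL, INCONSISTENT = "C", "N", "I"

# Hypothetical inquiry question: why did reading scores drop this year?
H1 = "H1: the new reading program is not working"
H2 = "H2: student attendance declined"
H3 = "H3: the assessment format changed"

# Rows are evidence items, columns are hypotheses.
matrix = {
    "Scores also fell in classes without the new program": {
        H1: INCONSISTENT, H2: CONSISTENT, H3: CONSISTENT},
    "Attendance was stable year over year": {
        H1: NEUTRAL, H2: INCONSISTENT, H3: NEUTRAL},
    "The assessment moved from paper to online": {
        H1: NEUTRAL, H2: NEUTRAL, H3: CONSISTENT},
}


def inconsistency_scores(matrix):
    """Count how many evidence items contradict each hypothesis."""
    scores = {}
    for ratings in matrix.values():
        for hypothesis, rating in ratings.items():
            scores[hypothesis] = scores.get(hypothesis, 0) + (rating == INCONSISTENT)
    return scores


def rank_hypotheses(matrix):
    """Order hypotheses from least to most contradicted (ties broken by name)."""
    scores = inconsistency_scores(matrix)
    return sorted(scores, key=lambda h: (scores[h], h))


if __name__ == "__main__":
    scores = inconsistency_scores(matrix)
    for hypothesis in rank_hypotheses(matrix):
        print(f"{scores[hypothesis]} inconsistent item(s): {hypothesis}")
```

Counting only the inconsistent ratings means that a hypothesis cannot rise in the ranking simply because a team has collected a lot of evidence that agrees with it, which is the habit that confirmation bias encourages.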

Drawing on similar ideas, the British Psychological Society (n.d.) lists practices such as engaging in inquiry, incorporating first-hand evidence, developing a culture of transparency, and promoting a diverse workforce as ways to help mitigate the impact of bias on decision making.

Seeking out disconfirming evidence in this way is similar to Karl Popper’s falsification theory. As Turner (2019) notes, the renowned philosopher of science Sir Karl Raimund Popper rejected classical inductivist views in favour of empirical falsification, concluding that the only way to test the validity of any theory is to try to prove it wrong. In Popper’s view, science, and indeed data, is all about falsification rather than confirmation.

Cook and Smallman (2007) experimented with two bias-reduction techniques in intelligence analysis, “Graphical Format” and “Others’ Interpretations.” They found that Graphical Format, in which data is presented using graphics rather than text, did effectively reduce bias. They argue that the graphical format works because it plays to the “Availability Heuristic” in honoring the recognition-primed nature of decision-making. “Others’ Interpretations,” on the other hand, did not reduce bias, and the authors surmise that participants simply focused less when entertaining the interpretations of others.

In their discussion of omission bias, Chung, Kim & Sohn (2014) argue that bias is affected by the level of risk that a person is comfortable with. Schwitzer and Hershey (1991) agree, noting that the desire to maintain the status quo affects bias. Lejuez et al. (2002) identify the Balloon Analogue Risk Task (BART) as a tool that can help find a balance between omission bias and action bias (an over-reliance on action as a way to compensate for omission). BART is a digital game that uses contingencies to simulate risk situations as a way to identify a propensity for risk taking. Psychology Today (n.d.) provides a Risk Taking Test that relies on the categories of sensation seeking, harm avoidance, conscientiousness, locus of control, comfort with ambiguity, and reward orientation to give participants the opportunity to reflect on their comfort level with risk.
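To make the idea of a risk contingency concrete, the following is a rough sketch of a balloon-style task in Python. It is not the BART software itself: the function names, the payout of 5 cents per pump, the limit of 128 pumps, and the uniformly random burst point are all assumptions made for illustration. Each pump adds to the potential payout but also raises the chance that the balloon bursts and the payout for that balloon is lost.

```python
import random


def play_balloon(planned_pumps, max_pumps=128, cents_per_pump=5, rng=None):
    """Return the cents earned on one balloon for a fixed number of pumps.

    The balloon bursts on a pump chosen uniformly between 1 and max_pumps
    (an assumed rule). Reaching the burst point forfeits the earnings.
    """
    rng = rng or random.Random()
    burst_point = rng.randint(1, max_pumps)
    if planned_pumps >= burst_point:
        return 0  # balloon burst before the player cashed out
    return planned_pumps * cents_per_pump


def average_earnings(planned_pumps, trials=10_000, seed=0):
    """Estimate the average payout of always pumping a fixed number of times."""
    rng = random.Random(seed)  # seeded so repeated runs give the same estimate
    total = sum(play_balloon(planned_pumps, rng=rng) for _ in range(trials))
    return total / trials


if __name__ == "__main__":
    for pumps in (5, 64, 127):
        print(f"{pumps:3d} pumps per balloon: about {average_earnings(pumps):.0f} cents on average")
```

Under these assumed rules, a very cautious player (few pumps) and a very action-oriented player (nearly the maximum number of pumps) both earn less on average than a player who stops near the middle, which is one way to picture the balance between omission and action described above.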

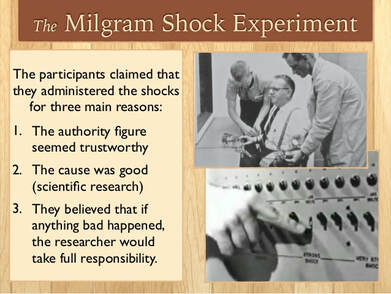

In a pioneering study of authority bias, Milgram (1963) had an experimenter (an authority figure) instruct participants to deliver shocks to a learner (in truth an actor). While no harmful shocks were ever delivered, twenty-six of the forty participants followed the experimenter’s directions and delivered what they believed to be the maximum shock, causing what they believed was extreme pain to the learner. Hinnosaar and Hinnosaar (2012) compared authority bias in Western and Eastern European cultures and found that it was significantly more prevalent in Eastern European cultures.

Katz (2013) explains that vividness bias occurs when we base our decisions on data that is loud and visible, even when that data may not be representative. Collins (1988) notes that the strongest effect of vividness may be on people’s theories about persuasion rather than on persuasion itself.

The University of California Office of Diversity and Outreach (2019) states that everyone holds unconscious biases about various social and identity groups, and that these biases stem from a tendency to organize social worlds by categorizing. Halvorson (2015) agrees, stating that it is important to recognize that all thinking results in bias.

When our collaborative inquiry team progressed to identifying strategies that we can implement to intentionally guard against bias, we discovered that many of the strategies were not specific to only one bias. Therefore, the remainder of our literature review focuses on strategies that address either a specific bias or bias in general.

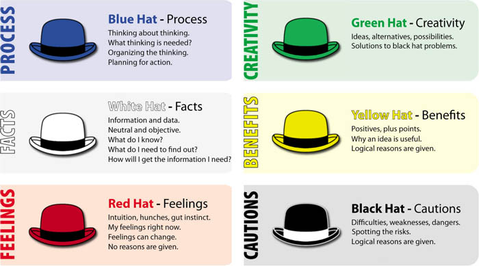

Kivunja (2015) examined the “Six Thinking Hats Model” of Dr. Edward de Bono, a recognized authority on critical thinking, as a framework to improve problem-solving skills in pedagogy. In a collaborative inquiry format, the premise is that asking team members to think from each of the six different perspectives helps to address bias.

Morals and values guide decisions, and are affected by the type and topic of collaborative inquiry in which we take part. Baron & Spranca (1997) suggest that protected values (PV) play a role in bias. However, Chung, Kim & Sohn (2014) argue that PV is not a consistent predictor of omission bias in particular (see Baron & Spranca, 1997; Bartels, 2008; Tanner & Medin, 2004). According to Chung et al., one of the main reasons for conflicting results regarding PV is whether the context of a moral decision demands action or inaction.

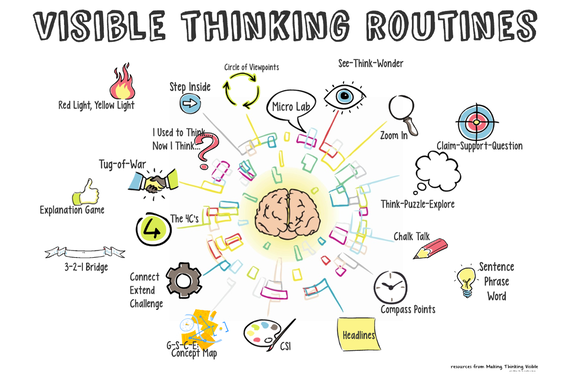

In a recent initiative, Microsoft set out to educate its employees about what unconscious bias is by developing an eLearning course entitled “Unconscious Bias.”

The Visible Thinking Routines model, developed by Project Zero of Harvard University’s Graduate School of Education (n.d.), is a research-based approach to teaching thinking dispositions. The routines were, however, originally developed at Lemshaga Akademi in Sweden as part of the “Innovating with Intelligence” project, and focused on developing students’ thinking in such areas as truth-seeking, understanding, fairness, and imagination. The Swedish model of addressing bias has since expanded its focus to include an emphasis on thinking through art and the role of cultural forces, and subsequently informed the development of Project Zero: Thinking Initiatives.

Psychologists from Harvard, the University of Virginia, and the University of Washington developed “Project Implicit.” The result is the Hidden Bias Tests, known in the academic world as Implicit Association Tests (IAT), which offer one way to measure unconscious bias. Social scientists believe that children begin to acquire prejudices and stereotypes as early as the age of three. As they mature, bias is perpetuated by conformity with in-group attitudes and by socialization through the culture at large. Acknowledging that biases are learned early and run counter to our commitment to just treatment is viewed as a first step in addressing bias. The organization Teaching Tolerance (n.d.) maintains an interactive website where team members can take the Hidden Bias pre-test to raise awareness of unconscious and confirmation bias.

Schraw and Dennison (1994) developed the Metacognitive Awareness Inventory (MAI) specifically for adult learners to build awareness of metacognitive knowledge and metacognitive regulation (which they referred to as the “Knowledge of Cognition Factor” and the “Regulation of Cognition Factor” respectively). Although each of these entails some work and may not be practical if time and resources are a concern, the models provide some points to be mindful of when working as an individual within a group: knowledge of cognition (being aware of the factors that influence your learning; knowing which strategies to use in learning; choosing the appropriate strategy for a specific learning situation) and regulation of cognition (setting goals; monitoring and controlling learning; evaluating your regulation).
